Web Scraping Prevention Techniques

Many websites prohibit web scraping and use anti-scraping measures to block automated data extraction. These protections can make it challenging and time-consuming to scale scraping activities. For instance, if a script sends requests too frequently (like once every second), the website may block those requests or display a message asking the user to slow down or try again later.

Fingerprinting

Fingerprinting is a technique used to identify and track clients based on detailed technical information such as IP addresses, user-agent strings, browser versions, operating systems, screen resolutions, installed fonts, and even hardware characteristics. By combining these signals, websites can create a unique “fingerprint” for each visitor. If multiple requests appear to originate from the same fingerprint in an automated pattern, the system can flag or block them, even if the IP address changes.

Example

from http.server import BaseHTTPRequestHandler, HTTPServer # import base classes for HTTP server

from time import time # import time function for request timing

requests = {} # dictionary to store request history per fingerprintclass CustomHandler(BaseHTTPRequestHandler): # define request handler class

def do_GET(self): # handle GET requests

now = time() # current timestamp

ip = self.client_address[0] # get client IP address

user_agent = self.headers.get(“User-Agent”, “”) # browser info

accept_lang = self.headers.get(“Accept-Language”, “”) # language preference

encoding = self.headers.get(“Accept-Encoding”, “”) # compression support

fingerprint = f”{ip}{user_agent}|{accept_lang}|{encoding}” # create a simple fingerprint using IP + headers

requests[fingerprint] = [t for t in requests.get(fingerprint, []) if now – t < 10] # keep only requests from last 10 seconds for this fingerprint

requests[fingerprint].append(now) # log current request timeif len(requests[fingerprint]) > 5: # if too many requests in time window, block client

self.send_response(429) # HTTP status: Too Many Requests

self.send_header(‘Content-type’, ‘text/plain’) # response type

self.end_headers() # finish HTTP headers

self.wfile.write(f”Fingerprint:{fingerprint} – Too many requests…”.encode(“utf-8”)) # send blocked message with fingerprint info

else:

self.send_response(200) # HTTP OK

self.send_header(‘Content-type’, ‘text/plain’) # response type

self.end_headers() # finish headers

self.wfile.write(f”Fingerprint:{fingerprint} – Server Running…”.encode(“utf-8”)) # send normal response with fingerprint inforeturn # end request handling

HTTPServer((“”, 8085), CustomHandler).serve_forever() # start server on port 8080 and run forever

from http.server import BaseHTTPRequestHandler, HTTPServer

from time import time

requests = {}

class CustomHandler(BaseHTTPRequestHandler):

def do_GET(self):

now = time()

ip = self.client_address[0]

user_agent = self.headers.get("User-Agent", "")

accept_lang = self.headers.get("Accept-Language", "")

encoding = self.headers.get("Accept-Encoding", "")

fingerprint = f"{ip}{user_agent}|{accept_lang}|{encoding}"

requests[fingerprint] = [t for t in requests.get(fingerprint, []) if now - t < 10]

requests[fingerprint].append(now)

if len(requests[fingerprint]) > 5:

self.send_response(429)

self.send_header('Content-type', 'text/plain')

self.end_headers()

self.wfile.write(f"Fingerprint:{fingerprint} - Too many requests...".encode("utf-8"))

else:

self.send_response(200)

self.send_header('Content-type', 'text/plain')

self.end_headers()

self.wfile.write(f"Fingerprint:{fingerprint} - Server Running...".encode("utf-8"))

return

HTTPServer(("", 8080), CustomHandler).serve_forever()Authentication

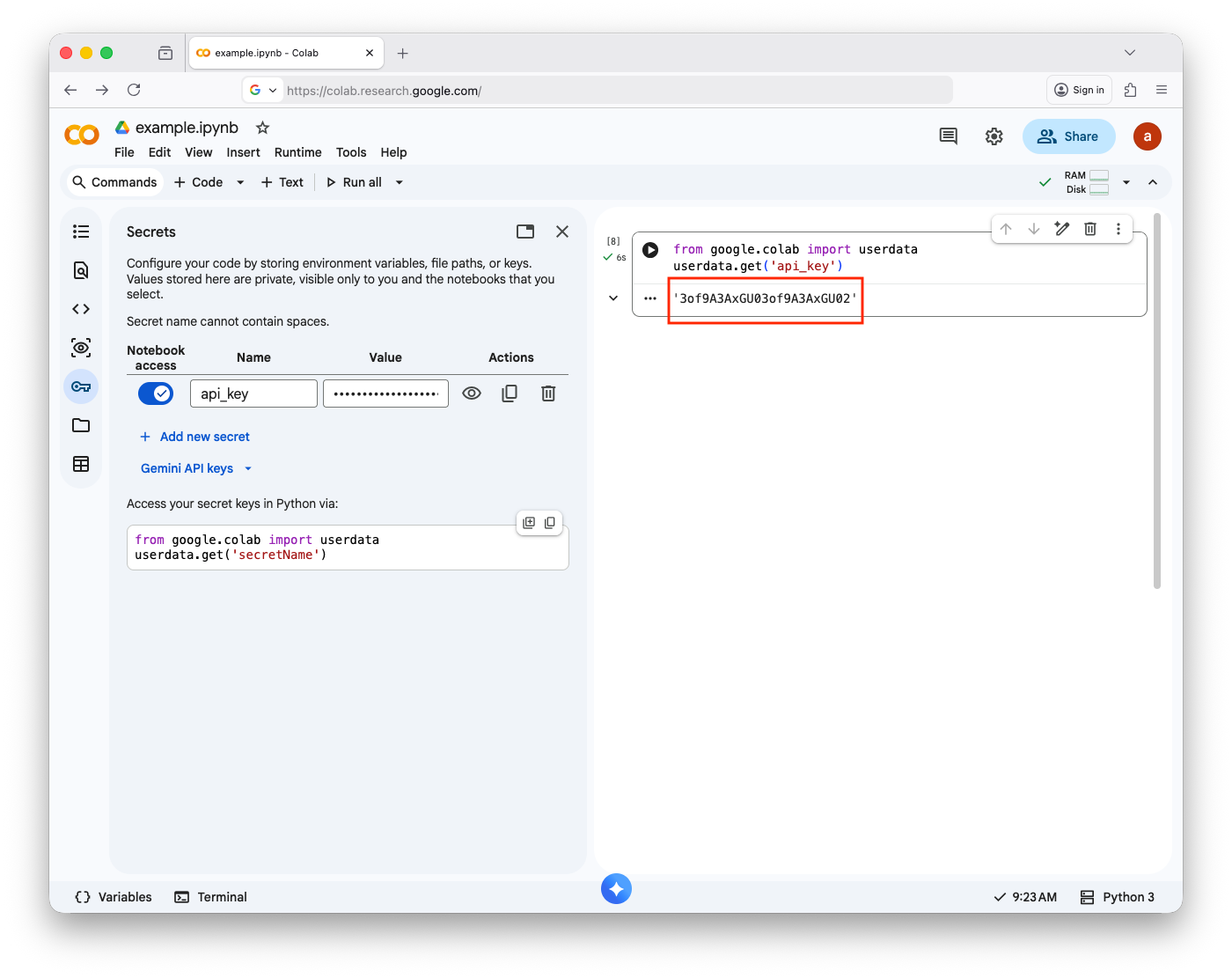

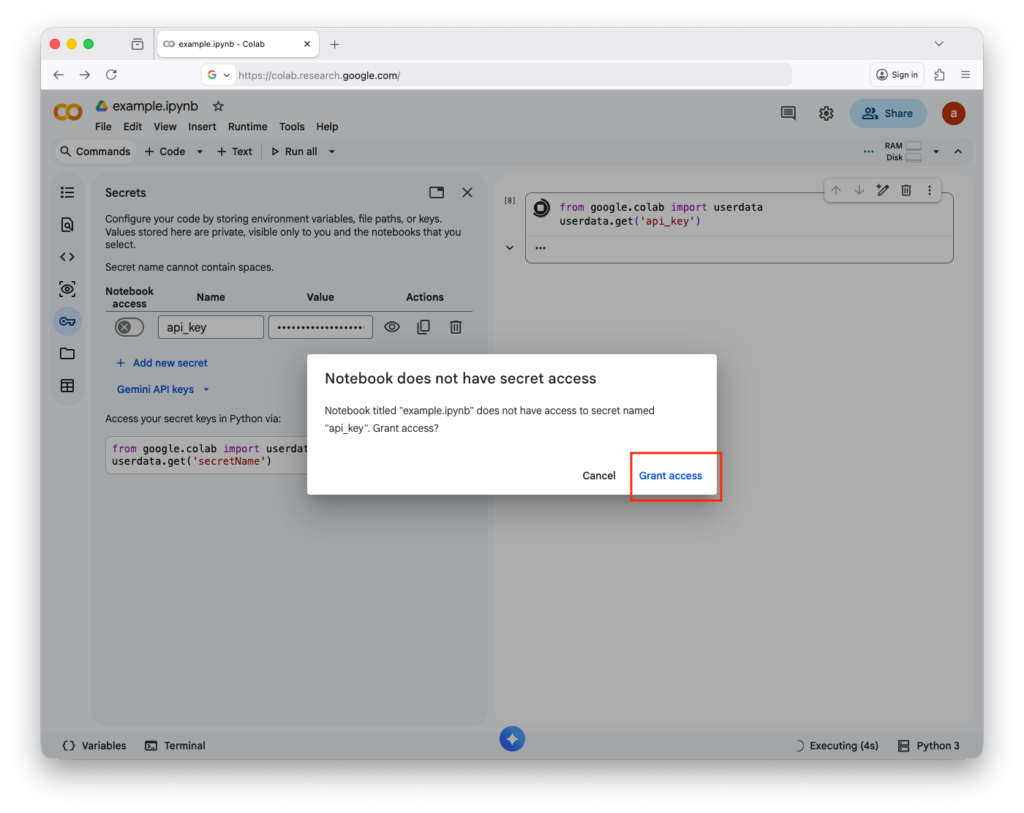

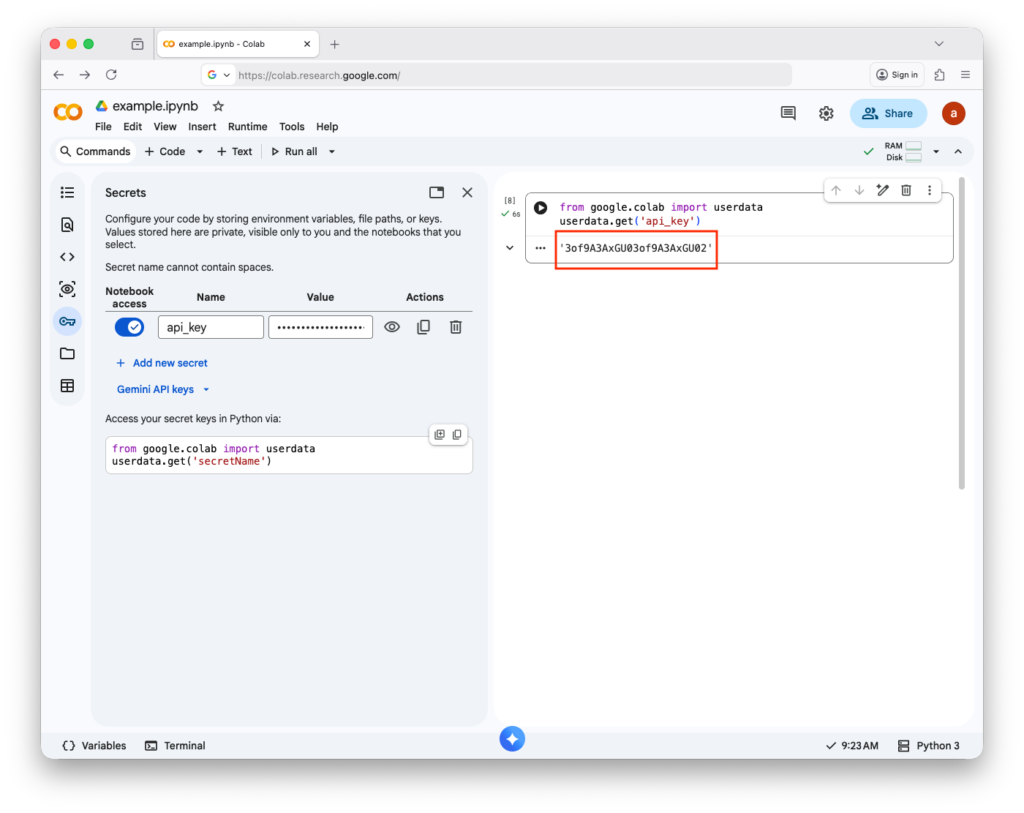

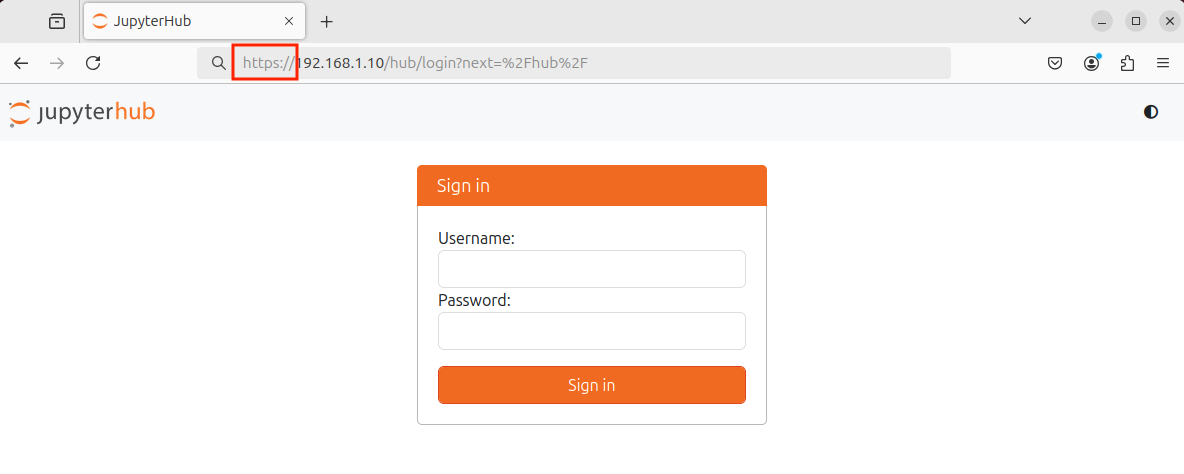

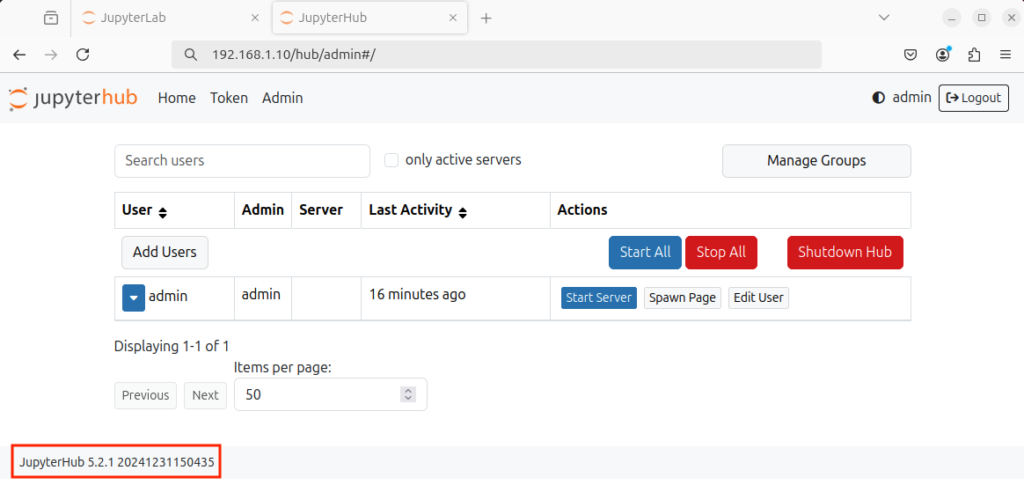

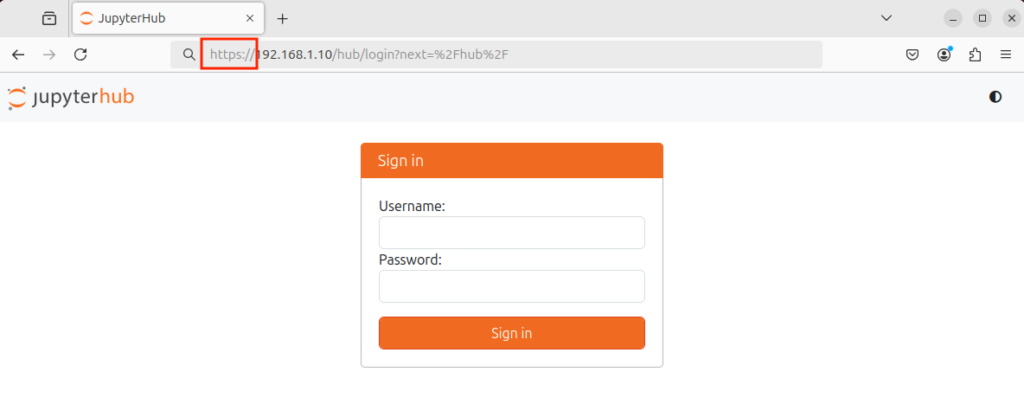

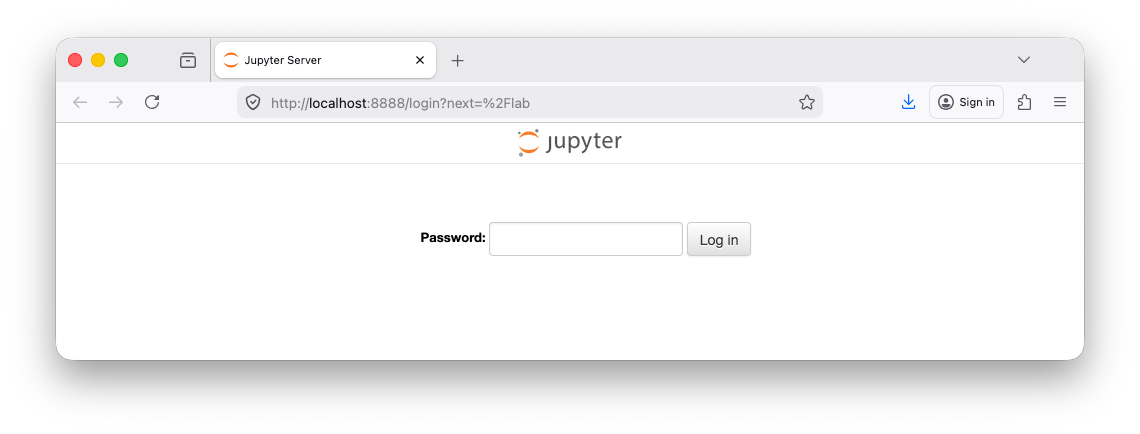

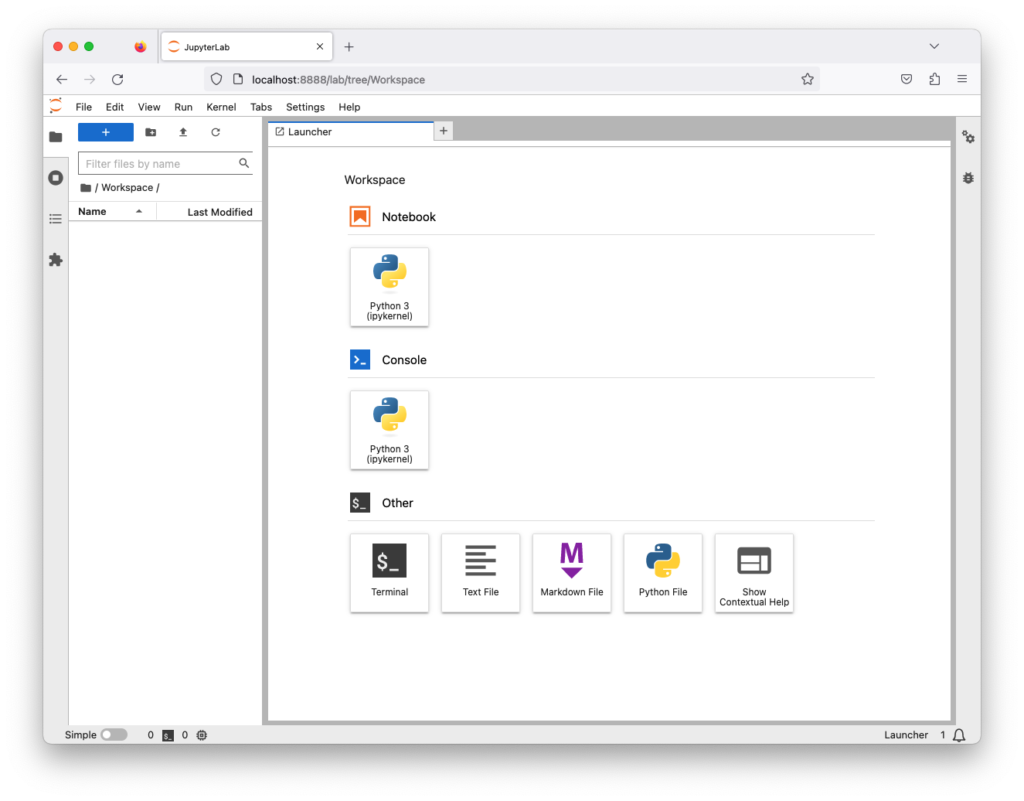

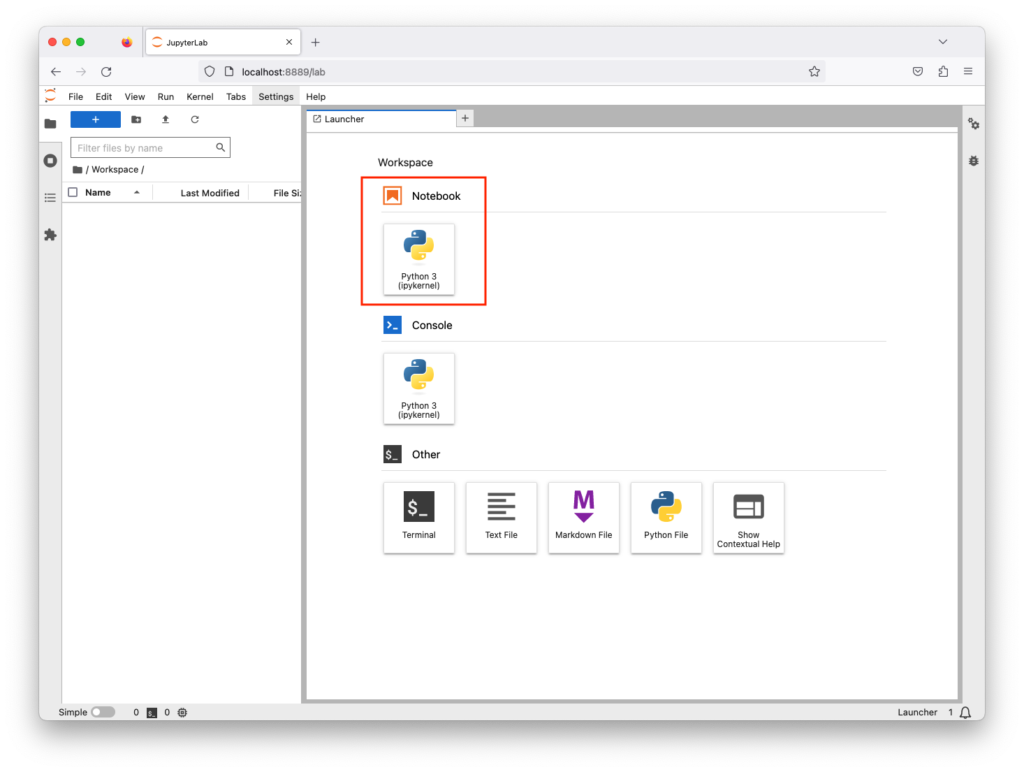

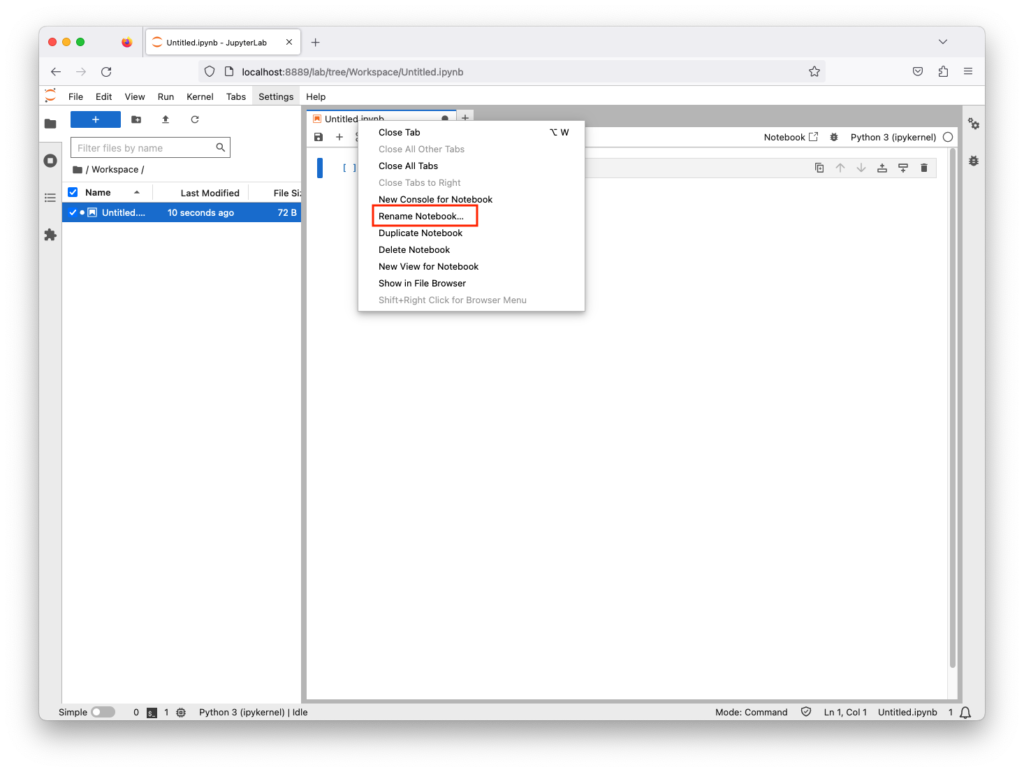

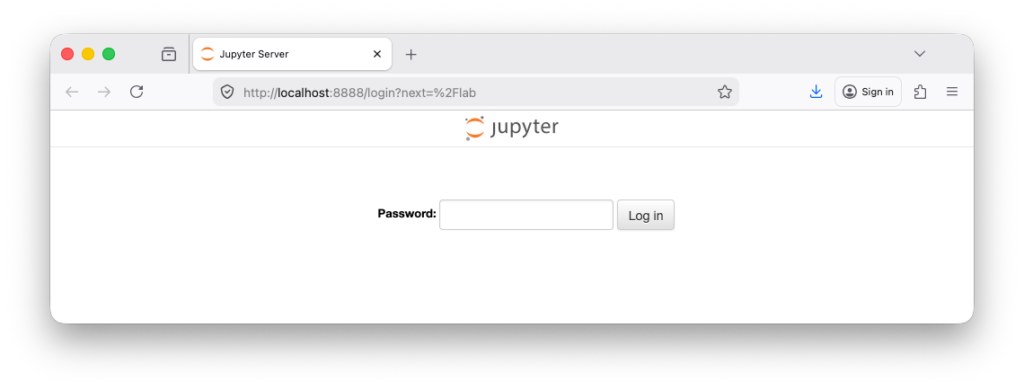

Authentication systems require users to verify their identity before accessing content. This is often achieved through login pages, API keys, or session tokens. By requiring users to authenticate, websites can better control who accesses their data and monitor usage per account. This also allows them to enforce limits on a per-user basis rather than per IP address, making scraping more challenging.

Example

from http.server import BaseHTTPRequestHandler, HTTPServer # import basic HTTP server classes

api_keys = {“Example-6C324086-6B3B-48D5-9FEE-4A30C66B70CC”:[“ip”:””,”user”,””]} # dictionary storing valid API keys and optional metadata (invalid Python dict syntax for nested list here)class CustomHandler(BaseHTTPRequestHandler): # define request handler class

def do_GET(self): # handle GET requests

api_key = self.headers.get(“X-API-Key”, “”) # extract API key from request headers

if api_key not in api_keys: # check if API key is invalid or missing

self.send_response(401) # return HTTP 401 Unauthorized

self.send_header(‘Content-type’, ‘text/plain’) # set response content type

self.end_headers() # finish HTTP headers

self.wfile.write(b”Authentication required”) # send authentication error message

else: # if API key is valid

self.send_response(200) # return HTTP 200 OK

self.send_header(‘Content-type’, ‘text/plain’) # set response content type

self.end_headers() # finish HTTP headers

self.wfile.write(b”Server Running…”) # send success response message

return # end request handlingHTTPServer((“”, 8080), CustomHandler).serve_forever() # start server on port 8080 and run forever

from http.server import BaseHTTPRequestHandler, HTTPServer

api_keys = {"Example-6C324086-6B3B-48D5-9FEE-4A30C66B70CC":["ip":"","user",""]}

class CustomHandler(BaseHTTPRequestHandler):

def do_GET(self):

api_key = self.headers.get("X-API-Key", "")

if api_key not in api_keys:

self.send_response(401)

self.send_header('Content-type', 'text/plain')

self.end_headers()

self.wfile.write(b"Authentication required")

else:

self.send_response(200)

self.send_header('Content-type', 'text/plain')

self.end_headers()

self.wfile.write(b"Server Running...")

return

HTTPServer(("", 8080), CustomHandler).serve_forever()Challenges (CAPTCHA)

CAPTCHA tests are designed to differentiate humans from bots. They may involve identifying distorted text, selecting images, solving puzzles, or performing simple interactive tasks. Since most automated scripts struggle with these challenges, CAPTCHA serves as an effective barrier to prevent large-scale scraping or automated form submissions.

Example

from http.server import BaseHTTPRequestHandler, HTTPServer # HTTP server framework

from random import randint # generate random numbers for CAPTCHA

from uuid import uuid4 # generate unique session ID for each CAPTCHA

captcha_db = {} # store captcha_id -> correct answer mappingclass Handler(BaseHTTPRequestHandler): # request handler class

def do_GET(self): # handle GET requests (show CAPTCHA page)

random_a = randint(1, 10) # first random number

random_b = randint(1, 10) # second random number

captcha_id = str(uuid4()) # create unique ID for this CAPTCHA session

captcha_db[captcha_id] = str(random_a + random_b) # store correct answer on server

self.send_response(200) # HTTP 200 OK

self.send_header(“Content-type”, “text/html”) # response is HTML page

self.end_headers() # finish headers

# send HTML form to user

self.wfile.write(f”””

<html>

<body>

<h3>CAPTCHA: What is {random_a} + {random_b}?</h3>

<form method=”POST”>

<input name=”answer” type=”text”>

<input type=”hidden” name=”captcha_id” value=”{captcha_id}”>

<input type=”submit” value=”Submit”>

</form></body>

</html>

“””.encode())def do_POST(self): # handle form submission

length = int(self.headers.get(‘Content-Length’)) # get size of request body

data = self.rfile.read(length).decode() # read and decode form data

fields = dict(x.split(“=”) for x in data.split(“&”)) # parse form fields

user_answer = fields.get(“answer”, “”) # user submitted answer

captcha_id = fields.get(“captcha_id”, “”) # session id from form

correct_answer = captcha_db.get(captcha_id, “”) # get stored correct answer

self.send_response(200) # HTTP OK

self.send_header(“Content-type”, “text/plain”) # plain text response

self.end_headers() # finish headers

if user_answer == correct_answer: # check if answer is correct

self.wfile.write(b”CAPTCHA passed”) # success message

else:

self.wfile.write(b”CAPTCHA failed”) # failure messagedel captcha_db[captcha_id] # remove CAPTCHA after attempt (single-use)

HTTPServer((“”, 8080), Handler).serve_forever() # start server on port 8080

from http.server import BaseHTTPRequestHandler, HTTPServer

from random import randint

from uuid import uuid4

captcha_db = {}

class Handler(BaseHTTPRequestHandler):

def do_GET(self):

random_a = randint(1, 10)

random_b = randint(1, 10)

captcha_id = str(uuid4())

captcha_db[captcha_id] = str(random_a + random_b)

self.send_response(200)

self.send_header("Content-type", "text/html")

self.end_headers()

self.wfile.write(f"""

<html>

<body>

<h3>CAPTCHA: What is {random_a} + {random_b}?</h3>

<form method="POST">

<input name="answer" type="text">

<input type="hidden" name="captcha_id" value="{captcha_id}">

<input type="submit" value="Submit">

</form>

</body>

</html>

""".encode())

def do_POST(self):

length = int(self.headers.get('Content-Length'))

data = self.rfile.read(length).decode()

fields = dict(x.split("=") for x in data.split("&"))

user_answer = fields.get("answer", "")

captcha_id = fields.get("captcha_id", "")

correct_answer = captcha_db.get(captcha_id, "")

self.send_response(200)

self.send_header("Content-type", "text/plain")

self.end_headers()

if user_answer == correct_answer:

self.wfile.write(b"CAPTCHA passed")

else:

self.wfile.write(b"CAPTCHA failed")

del captcha_db[captcha_id]

HTTPServer(("", 8080), Handler).serve_forever()Dynamic Content

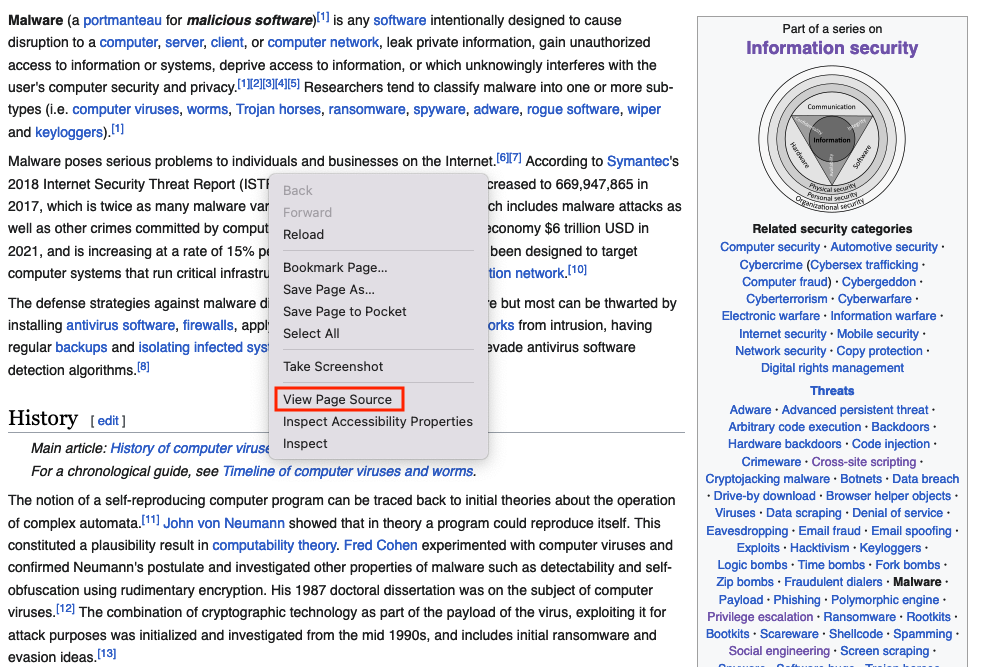

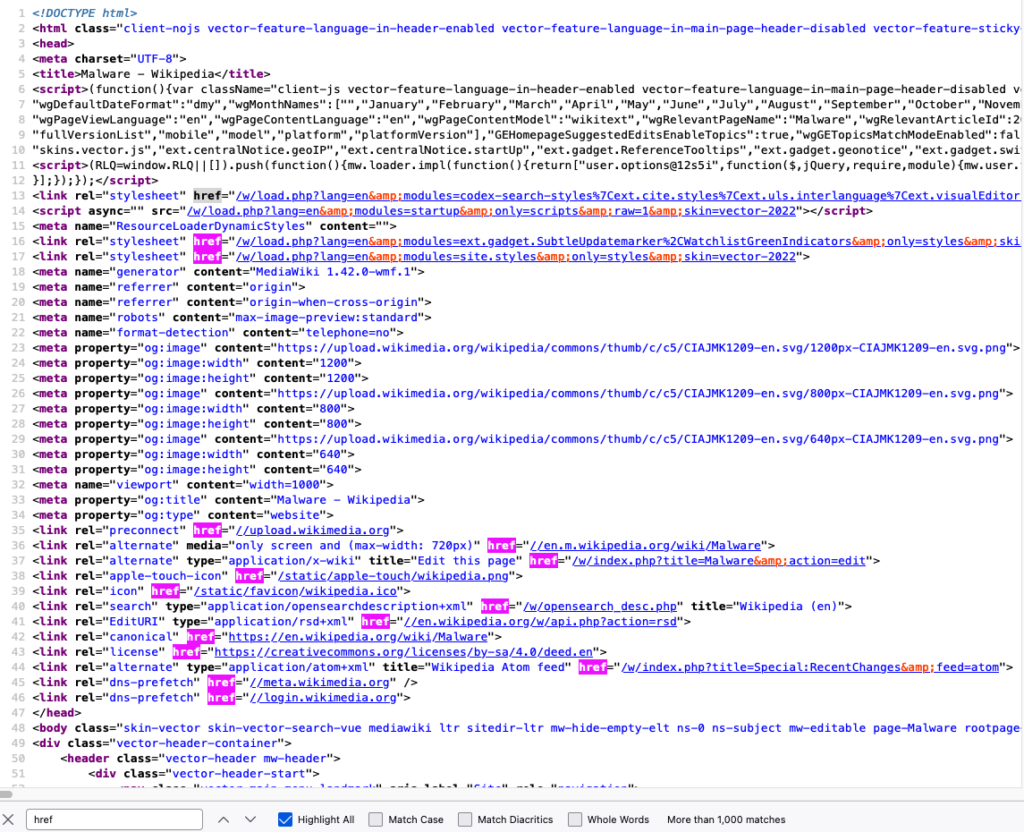

Dynamic content is generated at runtime rather than being fixed in the HTML source. This often involves JavaScript rendering, API calls, or asynchronous data loading. Since the content is not directly present in the initial page source, simple HTML-only scraping tools cannot easily extract the data without simulating a real browser environment.

from http.server import BaseHTTPRequestHandler, HTTPServer # HTTP server framework

from datetime import datetime # used to generate dynamic runtime timestampclass CustomHandler(BaseHTTPRequestHandler): # request handler class

def do_GET(self): # handle GET requests

if self.path == “/”: # main webpage route

self.send_response(200) # HTTP 200 OK

self.send_header(‘Content-type’, ‘text/html’) # response is HTML page

self.end_headers() # finish headers

self.wfile.write(b”””

<html>

<body>

<h1>Server Running…</h1>

<div id=”data”>Loading…</div>

<script>

setTimeout(() => { // wait 10 seconds before loading data

fetch(“/data”) // request dynamic backend endpoint

.then(r => r.text()) // convert response to text

.then(t => document.getElementById(“data”).innerText = t); // update page content

}, 10000); // 10000ms delay (10 seconds)

</script>

</body>

</html>

“””)

return # stop processing this requestif self.path == “/data”: # dynamic data endpoint

self.send_response(200) # HTTP OK

self.send_header(‘Content-type’, ‘text/plain’) # plain text response

self.end_headers() # finish headers

self.wfile.write(f”Dynamic Content Loaded: {datetime.now().strftime(“%m-%d-%Y %I:%M %p”)}”.encode()) # write the dynamic content

return # end request

HTTPServer((“”, 8080), CustomHandler).serve_forever() # start server on port 8080

from http.server import BaseHTTPRequestHandler, HTTPServer

from datetime import datetime

class CustomHandler(BaseHTTPRequestHandler):# request handler class

def do_GET(self):

if self.path == "/":

self.send_response(200)

self.send_header('Content-type', 'text/html')

self.end_headers()

self.wfile.write(b"""

<html>

<body>

<h1>Server Running...</h1>

<div id="data">Loading...</div>

<script>

setTimeout(() => { // wait 10 seconds before loading data

fetch("/data") // request dynamic backend endpoint

.then(r => r.text()) // convert response to text

.then(t => document.getElementById("data").innerText = t); // update page content

}, 10000);// 10000ms delay (10 seconds)

</script>

</body>

</html>

""")

return

if self.path == "/data":

self.send_response(200)

self.send_header('Content-type', 'text/plain')

self.end_headers()

self.wfile.write(f"Dynamic Content Loaded: {datetime.now().strftime("%m-%d-%Y %I:%M %p")}".encode())

return

HTTPServer(("", 8080), CustomHandler).serve_forever()Randomized Identifiers

Websites often change element IDs, class names, or API endpoints dynamically. This prevents scrapers from relying on fixed selectors to locate data. For instance, a product price element might have a different ID each time the page loads. This forces scrapers to constantly adapt and makes automation less reliable.

from http.server import BaseHTTPRequestHandler, HTTPServer # import HTTP server classes

from random import randint # used to generate random IDsclass CustomHandler(BaseHTTPRequestHandler): # define request handler

def do_GET(self): # handle GET requests

self.send_response(200) # send HTTP 200 OK status

self.send_header(‘Content-type’, ‘text/html’) # response is HTML

self.end_headers() # finish headers

random_id = f”id_{randint(1000,9999)}” # generate random element ID each request

# send HTML response to client

self.wfile.write(f”””

<html>

<body>

<div id=”{random_id}”>Gas Price is: $5.99 per gallon</div>

</body>

</html>

“””.encode())HTTPServer((“”, 8080), CustomHandler).serve_forever() # start server on port 8080

from http.server import BaseHTTPRequestHandler, HTTPServer

from random import randint

class CustomHandler(BaseHTTPRequestHandler):

def do_GET(self):

self.send_response(200)

self.send_header('Content-type', 'text/html')

self.end_headers()

random_id = f"id_{randint(1000,9999)}"

self.wfile.write(f"""

<html>

<body>

<div id="{random_id}">Gas Price is: $5.99 per gallon</div>

</body>

</html>

""".encode())

HTTPServer(("", 8080), CustomHandler).serve_forever()User Behavior Analysis

User Behavior Analysis technique focuses on analyzing how users interact with a website over time. Typical human behavior includes pauses, scrolling, clicks, and irregular timing, while bots tend to generate consistent, fast, and repetitive request patterns. Websites use machine learning or rule-based systems to detect anomalies, such as extremely fast navigation, identical click paths, or repetitive page access patterns, and subsequently restrict or block suspicious activity.

Honeypots

Honeypots are hidden elements embedded in a webpage that are either invisible or irrelevant to normal users (such as hidden links or form fields). Bots that blindly follow all available elements may end up interacting with these traps. Once triggered, the system can flag the behavior as automated and take action such as blocking the IP address, logging the activity, or redirecting the user.